Where synthetic data starts to break down

Synthetic data reflects the past, not the present

AI models learn from historical data. That means synthetic outputs tend to reproduce what is already known, rather than uncover emerging behaviours, cultural shifts or unmet needs.

As the MRS warns, synthetic data is inherently conservative: it smooths out variation and weak signals, precisely the signals that often matter most in innovation, brand strategy and customer experience design (MRS).

Bias is not removed, it is scaled

Synthetic data inherits the biases of the data it was trained on. If certain audiences, behaviours or viewpoints are underrepresented in the original dataset, the synthetic version will reinforce those gaps.

Hall & Partners caution that synthetic data can amplify bias while appearing more objective, because the outputs are statistically coherent and confidently expressed (Hall & Partners).

The danger is not obvious error; it is credible distortion.

Human nuance is flattened

Real people contradict themselves. They struggle to articulate motivations. They change their minds mid-sentence. Context, emotion and social pressure all shape responses.

Synthetic data tends to remove this messiness. Marketing Week argues that while this can make insight easier to consume, it strips away the very human complexity that drives real-world behaviour (Marketing Week).

Clean data is not always truthful data.

Plausible answers are mistaken for insight

AI can generate answers that sound right. The problem is that plausibility is not validation.

Quirk’s highlights that synthetic respondents cannot surprise you, challenge assumptions or introduce genuinely new thinking, because they are constrained by what the model already “knows” (Quirk’s).

This creates a subtle but dangerous shift: from discovering insight to confirming expectations.

The feedback-loop risk

In wider AI research, there is growing concern about what happens when models are trained on their own outputs. The resulting degradation, often referred to as model collapse, leads over time to reduced accuracy and increasingly generic outputs, as the rarer cases in the original data gradually disappear (Wikipedia – Model Collapse).

In market research terms, this means that if synthetic outputs feed back into the data used to build future models, organisations risk basing strategies on data that is progressively further removed from real customers.

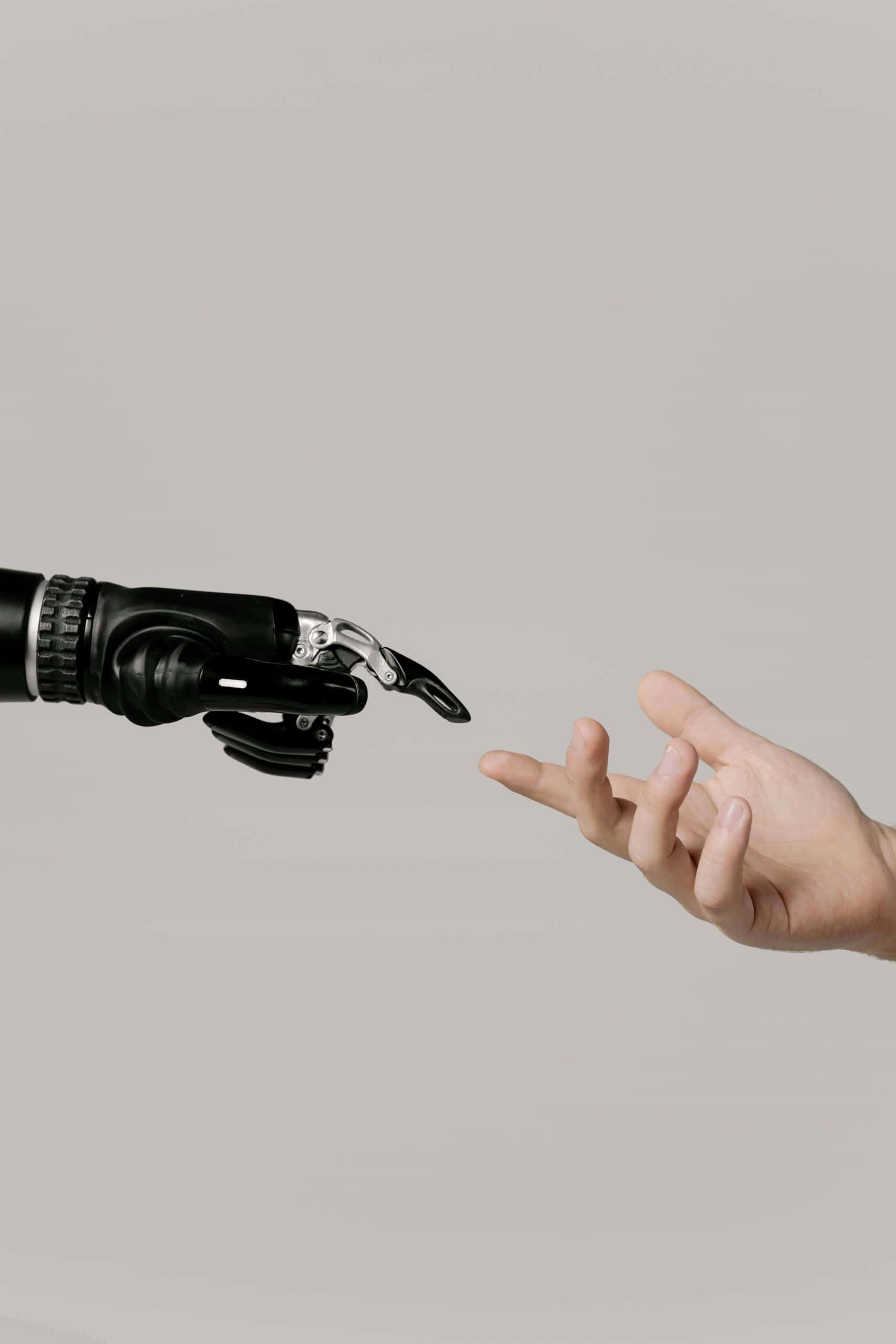

What this means for human-driven insight

The biggest risk is not technical; it’s cultural.

When AI produces instant answers, research teams can come under pressure to move faster, question less and accept outputs at face value. Marketing Week notes that this risks turning research into a justification tool rather than a learning discipline (Marketing Week).

Human researchers add value by:

- Framing better questions

- Interpreting contradiction

- Understanding context and culture

- Challenging internal bias

- Translating insight into judgement

Synthetic data cannot replicate those capabilities.

Where synthetic data does belong

Used responsibly, synthetic data can add value when it is clearly positioned as supporting evidence, not ground truth.

Most industry guidance agrees that it is best suited to:

- Testing survey logic and wording (MRS)

- Early-stage hypothesis generation

- Scenario and sensitivity modelling

- Privacy-preserving analytics

- Directional exploration before real research

Kantar describes synthetic data as “a useful accelerant, but not a substitute for human understanding” (Kantar).

Trust, transparency and the future of AI research

Industry bodies are already responding. Updates to the ICC/ESOMAR Code emphasise transparency, disclosure and ethical responsibility in AI-driven insight work, particularly around the use of synthetic data (Economic Times).

The future of trusted market research will not be a choice between AI and humans. It will belong to organisations that:

- Clearly distinguish synthetic from real data

- Use AI to accelerate learning, not replace listening

- Anchor decisions in real human experience

- Maintain methodological and ethical transparency

The bottom line

Synthetic data is not inherently bad. But unquestioned synthetic insight is dangerous.

In a world where AI can generate answers instantly, and where stories are quickly unpacked and scrutinised, trust will belong to the organisations that know when to slow down, listen to real people and apply human judgement.

Because in market research, the most valuable insight is not what a model predicts, but what people actually do, feel and struggle to articulate. At Vision One, we trust human insight, and we are sceptical about the accuracy and trustworthiness of research that over-relies on synthetic data.